In the natural language processing (NLP) field, large language models (LLMs) have emerged as powerful tools. Unlike traditional AI algorithms trained for narrow, specific tasks, LLMs are trained on vast text datasets, allowing them to understand and generate human language with unprecedented sophistication. One of the best-known examples is OpenAI's GPT-3, released in 2020.

Drawing on roughly 45 terabytes of raw internet text, which was filtered down substantially before training, GPT-3 achieved strong performance on many natural language tasks in zero-shot and few-shot settings, meaning it could apply its language skills to new tasks without additional training. Microsoft (together with NVIDIA) and Google have since released their own LLMs, Megatron-Turing NLG and PaLM, with 530 billion and 540 billion parameters, respectively.

One factor behind their rise is the availability of vast computational resources, especially GPUs and specialized AI accelerators like TPUs. Models are trained with self-supervised learning on massive datasets scraped from the internet, using the transformer architecture and its attention mechanism to learn complex textual representations.
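To make the attention idea more concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is a toy illustration only: the function names and the three two-dimensional "token" vectors are assumptions made for this example, and real transformers add learned projections, multiple heads, and many stacked layers.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dim).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        # The output is the weighted average of the value vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
    return outputs

# Hypothetical toy vectors; in self-attention the same vectors
# serve as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
contextual = attention(tokens, tokens, tokens)
for row in contextual:
    print([round(x, 3) for x in row])
```

Each output row is a blend of all the input vectors, weighted by how similar they are to the query. This is how a token's representation comes to reflect its context.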

LLMs represent a breakthrough in AI's ability to communicate, paving the way for applications like question-answering systems, machine translation, text summarization, and more. They have transformed the NLP field and how we interact with technology.

In this article, we will provide an overview of LLMs' capabilities and limitations. We will discuss breakthrough applications like content creation, virtual assistants, and analytics.

In addition, we will delve into algorithmic bias, privacy, and transparency challenges that you should address proactively. We aim to equip readers with insights to evaluate where LLMs can safely drive value while identifying areas that demand caution.


Enhancing natural language processing

Natural language processing (NLP) is a branch of artificial intelligence that analyzes and understands human language. In recent years, NLP has advanced due to LLMs pushing the boundaries of what's possible in language understanding and generation.

Traditional NLP techniques relied on rule-based systems and hand-crafted features, which often struggled to capture the nuances and complexities of language. With the advent of LLMs, accuracy and performance across NLP tasks have improved significantly.

Due to their sheer size and training on massive datasets, LLMs can capture complex nuances and dependencies in language much better than traditional NLP models. That translates to improved accuracy in machine translation, text summarization, sentiment analysis, question answering, entity recognition, and part-of-speech tagging.

- Machine translation: More accurate and natural-sounding translations across languages.

- Text summarization: Capturing key points and generating concise summaries of complex texts.

- Sentiment analysis: Understanding the emotional tone and underlying sentiment in text data.

- Question answering: Providing comprehensive and informative answers to open-ended questions.

- Entity recognition: Identifying and categorizing specific pieces of information within text.

- Part-of-speech tagging: Assigning grammatical labels (tags) to each word in a sentence.

However, LLMs are not limited to specific tasks. They can be adapted to perform a wide range of NLP tasks with minimal adjustments, such as a change of prompt or light fine-tuning, reducing the need for specialized models for each domain. That flexibility makes them valuable in applications including chatbots, virtual assistants, and automated customer support systems.
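One common form of minimal adjustment is to change only the prompt. The sketch below shows how a single model could be pointed at different tasks through templates, with optional worked examples turning a zero-shot prompt into a few-shot one. The template wording, the `TASK_TEMPLATES` dictionary, and the `build_prompt` helper are all hypothetical, and no real model is called.

```python
# Hypothetical prompt templates; the wording is illustrative, not tuned for any model.
TASK_TEMPLATES = {
    "translation": "Translate the following text into {target}:\n{text}",
    "summarization": "Summarize the following text in one sentence:\n{text}",
    "sentiment": ("Classify the sentiment of the following text as "
                  "positive, negative, or neutral:\n{text}"),
}

def build_prompt(task, text, examples=(), **options):
    """Build a zero-shot prompt, or a few-shot prompt when examples are given."""
    if task not in TASK_TEMPLATES:
        raise ValueError(f"unknown task: {task}")
    template = TASK_TEMPLATES[task]
    parts = []
    # Each (input, output) example is rendered with the same template.
    for example_input, example_output in examples:
        parts.append(template.format(text=example_input, **options))
        parts.append(f"Answer: {example_output}")
    # The real input comes last, with the answer left open for the model.
    parts.append(template.format(text=text, **options))
    parts.append("Answer:")
    return "\n".join(parts)

zero_shot = build_prompt("sentiment", "The battery died after a day.")
few_shot = build_prompt(
    "sentiment",
    "The battery died after a day.",
    examples=[("I love this phone.", "positive")],
)
print(zero_shot)
print("---")
print(few_shot)
```

The resulting string would be sent to whichever model you use. Only the template changes between tasks, not the model itself.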

Despite their contributions to NLP, LLMs come with challenges. LLMs trained on biased data can perpetuate harmful stereotypes if the bias is not carefully addressed. Understanding how LLMs arrive at their outputs remains challenging, raising concerns about trust and reliability. Their powerful capabilities also require responsible development and safeguards to prevent harmful applications.

LLMs represent a significant leap forward in NLP, enabling us to interact with language in richer and more meaningful ways. As we address the challenges and work towards responsible development, they hold immense potential to transform how we communicate, access information, and create content.


Applications in content generation

Large language models have revolutionized content generation by enabling automated text generation at scale. They can generate different content formats like blog posts, articles, marketing copy, product descriptions, poems, scripts, and musical pieces.

They can also personalize content based on user preferences, demographics, or previous interactions, and translate and generate content in multiple languages, expanding reach and accessibility.

Content generation powered by large language models also has vast potential in various domains. It can automate the creation of news content (summarizing articles or generating reports from factual data), marketing material (personalized ad copy, product descriptions, or email marketing content), and social media posts (engaging captions and posts for social media platforms).

LLMs can also assist content creators in various ways, saving them time and effort. They can suggest ideas, write outlines, or generate drafts to overcome creative hurdles. They can also assist with finding relevant data and citations for factual accuracy. Lastly, they can help identify grammar errors, typos, and stylistic inconsistencies.

However, ensuring the responsible use of large language models in content generation is important. Quality control, plagiarism detection, and maintaining ethical standards are essential considerations in harnessing the power of these models for content creation.

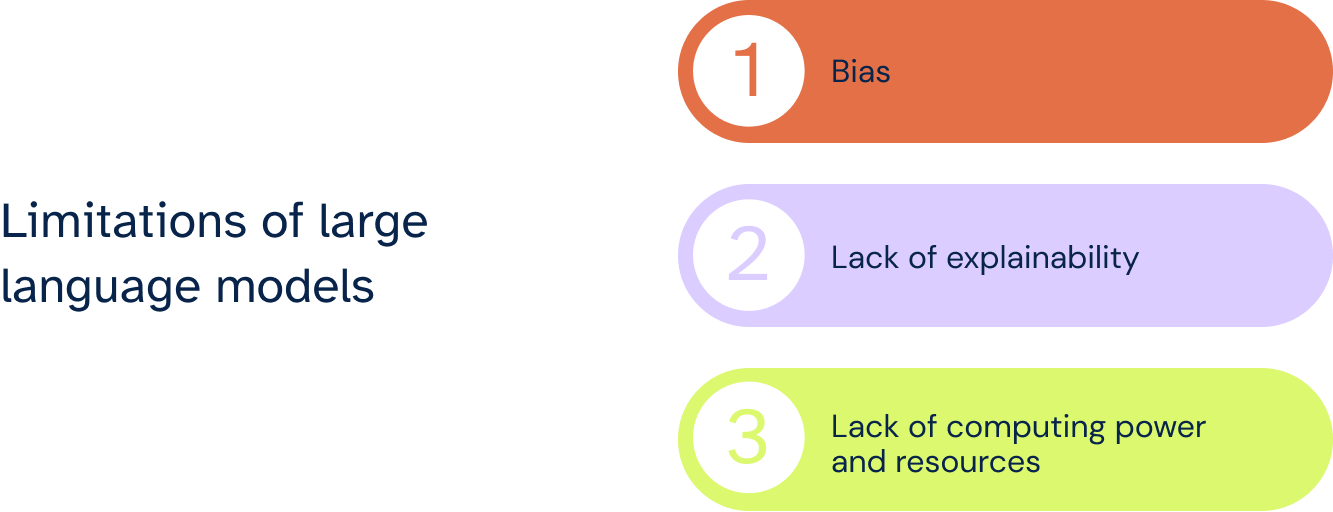

Limitations of large language models

While large language models have undoubtedly revolutionized natural language processing, they also have limitations. Let's examine these limitations in detail.

Bias

One major limitation is bias, which can manifest in various ways. It can impact the outputs of LLMs and raise concerns about fairness, ethics, and responsible use.

LLMs are trained on massive datasets of text and code, which reflect societal biases and prejudices. This data can include stereotypes, discriminatory language, and unfair representations of specific groups. Moreover, the algorithms used to train and operate LLMs can introduce biases of their own or unintentionally amplify those already present in the data.

That can result in outputs that are offensive, harmful, or discriminatory towards specific groups, perpetuating existing inequalities. Biases present in the training data can also lead to factually incorrect or misleading outputs.

To address bias, carefully choose and clean training data to remove biases and promote diversity. Develop algorithms that explicitly consider fairness metrics and mitigate bias during training and inference. Implement human oversight and provide explainable AI solutions to identify and address potential biases in LLM outputs.

Establish ethical guidelines and regulations for developing and using LLMs to ensure responsible and unbiased applications.
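As one illustration of a fairness metric, the sketch below computes a demographic parity gap: the difference in the rate of favorable outcomes between groups. The audit data, group labels, and function names are made up for this example. Demographic parity is only one of several fairness definitions, and which one fits depends on the application.

```python
from collections import defaultdict

def positive_rates(records):
    """Share of favorable outcomes per group.

    `records` is a list of (group, outcome) pairs, where outcome is 0 or 1.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += outcome
    return {group: positives[group] / totals[group] for group in totals}

def demographic_parity_gap(records):
    """Largest difference in favorable-outcome rate between any two groups."""
    rates = positive_rates(records)
    return max(rates.values()) - min(rates.values())

# Made-up audit data: 1 means the model produced the favorable output.
audit = [
    ("group_a", 1), ("group_a", 1), ("group_a", 0), ("group_a", 1),
    ("group_b", 1), ("group_b", 0), ("group_b", 0), ("group_b", 0),
]
print(positive_rates(audit))
print(demographic_parity_gap(audit))
```

In this toy data, group_a receives the favorable output 75% of the time and group_b 25% of the time, a gap of 0.5. A gap near zero would suggest the two groups are treated similarly on this one measure.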

Bias in LLMs is a complex issue with significant consequences. By acknowledging these limitations, actively addressing bias through various methods, and prioritizing ethical development, you can ensure that LLMs are used responsibly and contribute to a more inclusive and equitable future.

Lack of explainability

While LLMs have impressive capabilities, their "black box" nature poses another limitation: lack of explainability. That means we often don't understand why they generate certain outputs, limiting their usability and raising concerns about accountability and trust.

As deep learning models, LLMs have intricate internal structures with billions of parameters influencing their outputs. Unraveling these relationships to understand how a model arrives at an output is incredibly challenging. LLM predictions are based on complex statistical calculations across numerous data points, and these calculations lack clear, logical steps, which makes it difficult to pinpoint the reasoning behind a specific output.

During training, the primary goal is often to achieve high accuracy on specific tasks. Explainability might not be explicitly prioritized, leading to less transparent models.

Because of these challenges, users struggle to trust LLM outputs when they cannot see the reasoning behind them, which hinders adoption in critical applications where transparency is essential. Identifying and fixing errors is also difficult without understanding why an LLM makes a mistake, which slows improvement and development.

Identifying and mitigating potential biases woven into LLM outputs also becomes challenging without understanding the internal decision-making processes. That lack of explainability can make LLMs vulnerable to manipulation and misuse for malicious purposes like generating fake news or biased content.

One way to address that lack of explainability is through explainable AI (XAI) techniques: tools and methods that make LLM decisions more transparent and understandable. These include feature attribution, counterfactual explanations, and attention visualization.
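Feature attribution can be sketched with a simple occlusion approach: remove one token at a time and measure how much the score changes. The scorer below is a hypothetical stand-in for a real model (a hand-written word-weight table), so the example shows the attribution procedure rather than how any actual LLM behaves.

```python
# Hypothetical stand-in for a real model: a hand-written word-weight table.
TOY_WEIGHTS = {"great": 2.0, "good": 1.0, "bad": -1.0, "terrible": -2.0}

def toy_score(tokens):
    """Return a sentiment-like score for a list of tokens."""
    return sum(TOY_WEIGHTS.get(token.lower(), 0.0) for token in tokens)

def occlusion_attribution(tokens, score_fn):
    """Attribute the score to each token by removing it and measuring the change."""
    baseline = score_fn(tokens)
    attributions = []
    for i, token in enumerate(tokens):
        without = tokens[:i] + tokens[i + 1:]  # the input with token i removed
        attributions.append((token, baseline - score_fn(without)))
    return attributions

sentence = "The plot was great but the ending was terrible".split()
for token, contribution in occlusion_attribution(sentence, toy_score):
    print(f"{token:>10} {contribution:+.1f}")
```

Tokens with a large positive or negative contribution are the ones the scorer relied on most. With a real model, `score_fn` would wrap a model call, and each occlusion costs one extra call per token.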

You can also have human experts analyze LLM outputs, interpret their reasoning, and provide contextually relevant explanations. Another option is to explore models that are more interpretable by design, potentially sacrificing some performance for transparency.

Lack of explainability remains another major hurdle for LLMs. By actively researching and implementing explainable AI techniques, fostering human collaboration, and adopting responsible development practices, we can unlock the full potential of LLMs while ensuring their safe and ethical use.

Lack of computing power and resources

Additionally, large language models require substantial computing power and resources, making them inaccessible for individuals or organizations with limited resources.

Training requires massive amounts of data, complex algorithms, and powerful computing infrastructure. The resulting costs can limit accessibility and hinder research and development efforts. Running LLMs in real-world applications also often requires extensive resources, which can restrict their use in resource-constrained environments or applications requiring real-time responses.
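A rough back-of-the-envelope calculation shows why. The sketch below estimates the memory needed just to hold a model's weights, assuming GPT-3's published size of 175 billion parameters and common numeric formats. It ignores activations, optimizer state, and caching, so real requirements are higher, especially during training.

```python
# Bytes needed to store one parameter in each numeric format.
BYTES_PER_PARAMETER = {"float32": 4, "float16": 2, "int8": 1}

def weight_memory_gb(num_parameters, dtype="float16"):
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return num_parameters * BYTES_PER_PARAMETER[dtype] / 1e9

gpt3_parameters = 175_000_000_000  # GPT-3's published parameter count
for dtype in BYTES_PER_PARAMETER:
    print(f"{dtype}: {weight_memory_gb(gpt3_parameters, dtype):,.0f} GB")
```

Under these assumptions the weights alone take about 700 GB in float32, 350 GB in float16, and 175 GB in int8. That is far more than a single consumer GPU can hold, which is why such models are typically spread across many accelerators.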

The high energy consumption associated with training and running LLMs also raises concerns about their environmental impact. More efficient training methods and hardware optimization must be explored to address this. LLMs' resource-intensive nature also creates barriers to entry, limiting the diversity of developers and applications. Addressing this requires finding ways to make LLMs more resource-efficient and accessible.

One potential solution for scaling LLMs is investing in more powerful and efficient computing hardware, including specialized AI accelerators.

You can also look into smaller, more efficient LLM architectures that maintain performance while reducing resource requirements. Cloud-based access to pre-trained LLMs can also democratize their use and reduce individual resource burdens. Lastly, leveraging pre-trained models and fine-tuning them for specific tasks can be more resource-efficient than training from scratch.

LLMs' computational demands significantly hinder their wider adoption and responsible development. By exploring diverse solutions like hardware advancements, model optimization, and responsible resource management, we can unlock the full potential of LLMs while ensuring their sustainability and accessibility.

Guiding LLMs thoughtfully

While large language models represent an incredible technological achievement, they have significant limitations that require further research and consideration.

Their lack of grounding in the physical world and susceptibility to inheriting biases from flawed training data can result in hallucinated content, unfair discrimination, and potential harm if deployed without oversight.

Moving forward, efforts to improve LLMs should focus on developing interpretability and transparency around their decision-making processes. Techniques like semi-supervised learning on carefully curated datasets could help impart common sense, social awareness, and ethics.

Rigorous testing protocols are needed to probe their reliability across diverse demographics and use cases. Safety constraints should be built directly into models to align their behavior with human values.

By acknowledging current weaknesses alongside strengths, we can orient LLMs to amplify, rather than replace, uniquely human creativity and wisdom. Their future development and deployment should proceed with caution and care under a responsible and ethical AI framework.

Used judiciously, LLMs may assist humans in many beneficial ways, but we must remain watchful of their limitations to guide them as helpful tools rather than unconstrained agents acting upon the world.

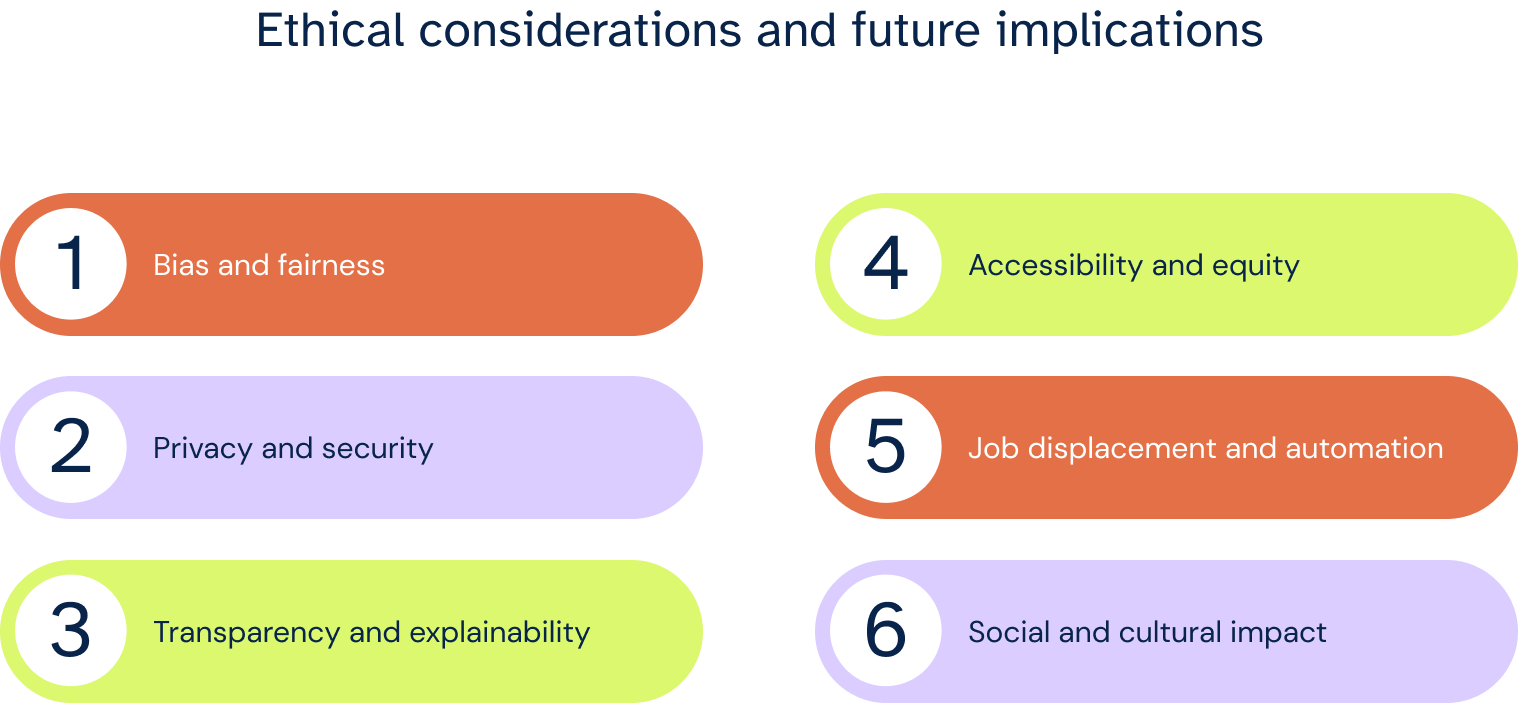

Ethical considerations and future implications

Ethical considerations become paramount as large language models evolve and become more powerful. LLMs trained on biased data can perpetuate harmful stereotypes and discriminatory outputs, so mitigating data bias through careful curation and representation is crucial. A model's design and optimization can also inadvertently introduce bias, which makes regular auditing and transparency around fairness metrics essential.

LLMs trained on personal data also raise privacy concerns, requiring clear consent, data anonymization, and robust security measures. Their ability to generate realistic text can be misused to spread harmful content. Fact-checking, user education, and safeguards against malicious use are necessary.
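As a small illustration of data anonymization, the sketch below replaces email addresses and phone-like numbers with placeholder tags before text is stored or used for training. The regular expressions and the `redact` helper are deliberately simple assumptions made for this example. Real anonymization needs much broader coverage (names, addresses, identifiers) and usually dedicated tooling.

```python
import re

# Deliberately simple patterns; real PII detection needs far broader coverage.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text):
    """Replace email addresses and phone-like numbers with placeholder tags."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = PHONE_PATTERN.sub("[PHONE]", text)
    return text

sample = "Contact Jane at jane.doe@example.com or +1 555 010 9999 before Friday."
print(redact(sample))
```

Note that the name "Jane" survives redaction here. Pattern matching alone catches only well-structured identifiers, which is one reason anonymization is harder than it first appears.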

LLMs' complex internal workings make it difficult to understand how they make their decisions, hindering accountability and trust. XAI techniques are key to transparency.

Training and running LLMs also require significant resources, potentially limiting access and creating an equity gap. Exploring resource-efficient models and democratizing access is essential. LLMs automating tasks currently performed by humans also raise concerns about job displacement and its impact on livelihoods. Reskilling and retraining initiatives are crucial.

Their widespread use could influence how people communicate and interact, raising questions about authenticity and real-world interaction. LLMs require responsible governance and ethical frameworks to guide their development and use.

To summarize, responsible development and careful attention to these ethical considerations are essential to ensure LLMs benefit society without exacerbating existing inequalities or causing harm.

Written by Keith McGrath

Keith McGrath is a seasoned professional with a wealth of experience in architecting and designing real-time intraday derivatives pricing and risk systems. His expertise spans commodities, interest rate derivatives, and credit derivatives businesses, where he has played pivotal roles in developing global risk and PnL attribution systems. Keith is known for his direct interactions with trading desks, driving innovative solutions that empower effective decision-making.

- Keith possesses a profound understanding of derivatives valuation, market risk, and PnL attribution across various asset classes, making him a trusted authority in financial markets.

- Keith's remarkable skills extend to architecting and designing artificial intelligence solutions for Governance, Risk, and Compliance (GRC) applications, enhancing regulatory and operational efficiency.

- Keith excels in volatility models and stochastic processes, enabling the development of advanced pricing and risk models that optimize profitability.

- Proficient in C#, C++, WPF, Java, and Python, Keith leverages his technical versatility to solve complex financial challenges.

Keith McGrath's unique blend of financial expertise, AI acumen, and leadership skills makes him an invaluable asset for organizations seeking to enhance their derivatives pricing and risk systems while integrating cutting-edge AI solutions into their GRC processes. His commitment to innovation and proven track record in both domains set him apart in the finance and technology sectors.